What the heck is a formal process? Is it in the category of “you know it when you see it”?

A formal process is a methodical way of tackling any repetitive process so that it can be handled in a standardized way. The idea of formality is to reduce variation in output and minimize the chance of forgetting something. Formal processes include the ideas of studying, planning, measurement, and process refinement; they cost more and take longer than just jumping in and getting things done.

Imagine if they tried to make cars in an informal way… would you drive them?

Informal processes are faster and cheaper because you get rid of the overhead of a formal process. It makes sense to be informal when the variation of the process output is not very sensitive to studying, planning, measurement, or refinement.

We are all familiar with trying to use formality when it makes no sense: you simply end up with process for process’s sake and waste time and money.

In software, formality has become synonymous with documentation, and frankly we all hate documentation. We don’t like to produce it and we don’t like to read it. I’ve instructed hundreds of engineers and only a few people bother to read the requirements brick. The way education is going right now I’m not even sure that university graduates can read anyways, but I digress… 🙂

For example, documentation for requirements, design, and testing is the end result of a formal process; it is not the formal process itself. It is smart not to produce more documentation than you need, but that does not mean that the formal processes that create the documentation are optional.

Formal processes in software development cluster around two very simple ideas:

- getting the correct requirements

- elimination of defects

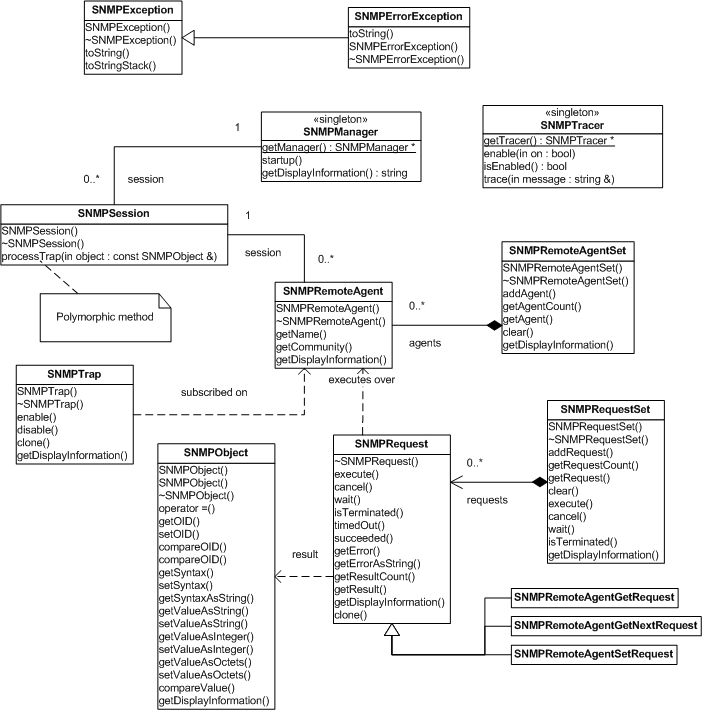

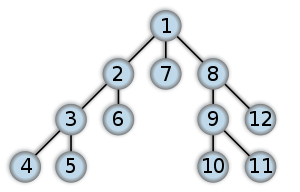

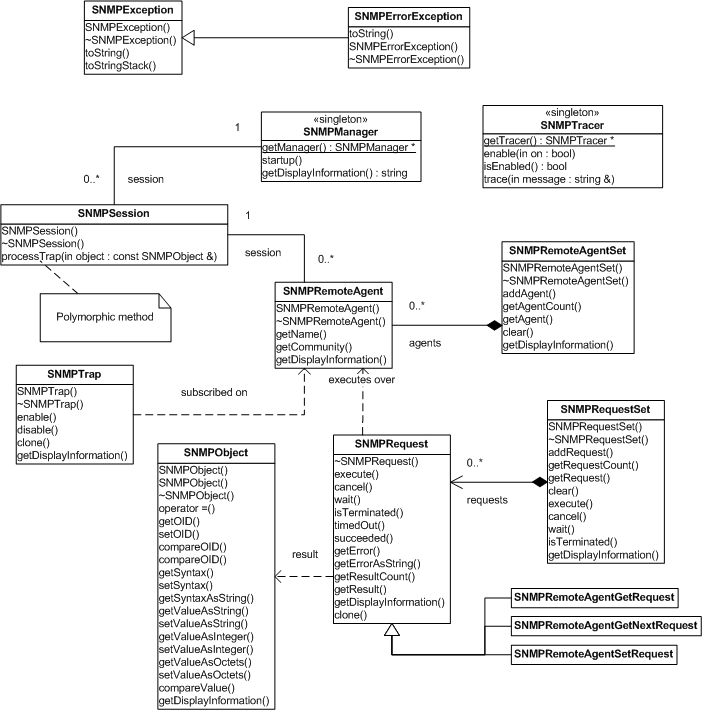

The problem is that formal processes only work when you do enough of them to make a difference. That is, doing too little of a formal process is just as bad as doing too much. For example, UML class diagrams will not only help you to plan code but also educate new developers on the team.

Producing UML diagrams is a formal practice; however, if you insist on producing UML diagrams for everything then you will end up spending a significant amount of time creating the diagrams to get a diminishing benefit. Not having any UML diagrams will lead to code being developed more than once simply because no one has a good overview of the system.

Selective UML diagrams can really accelerate your development.

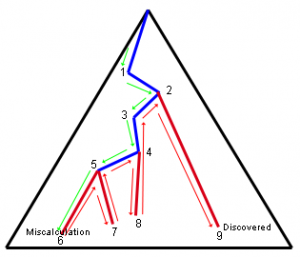

Visualizing Formality

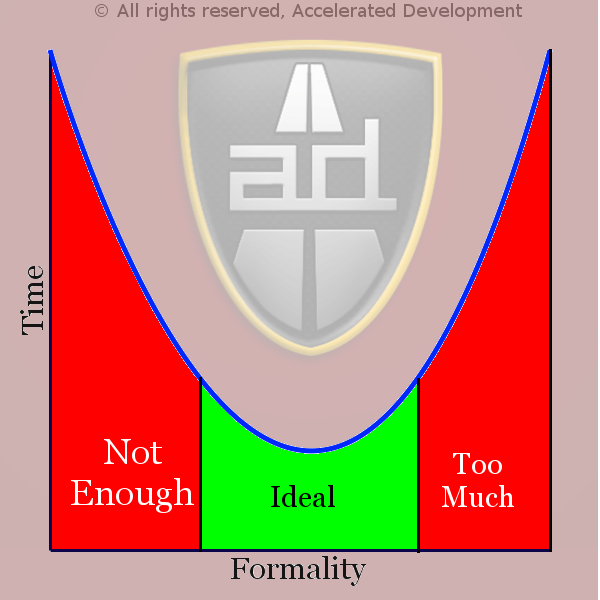

As you increase any formal practice, the effect of that practice will increase. For example, the more formal you are with UML diagrams, the fewer defects you are likely to have:

However, as you increase formality, the costs of keeping track of all your artifacts go up:

When you put the two graphs together you get this effect:

This shows that there is a cost to not having enough formality, i.e., having no UML diagrams means that more code gets developed, and less reuse means more defects. However, producing too many UML diagrams carries the cost of producing the diagrams and keeping them updated. The reality is that for any formal practice there is a sweet spot where just enough formality gives the lowest cost.

What that means is that productivity will be maximized in the sweet spot.
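The sweet spot can be illustrated with a toy model. This is only a sketch: the two cost curves below (defect cost falling exponentially, artifact overhead rising linearly) and all of their constants are made-up assumptions, not measured data. The point is only that adding a falling curve to a rising curve produces a minimum somewhere in between.

```python
import math


def defect_cost(formality: float) -> float:
    """Hypothetical curve: the cost of defects falls as formality rises."""
    return 100.0 * math.exp(-3.0 * formality)


def overhead_cost(formality: float) -> float:
    """Hypothetical curve: the cost of creating and updating artifacts rises."""
    return 60.0 * formality


def total_cost(formality: float) -> float:
    """Combined cost of too little formality plus too much formality."""
    return defect_cost(formality) + overhead_cost(formality)


def sweet_spot(steps: int = 100) -> float:
    """Scan formality levels from 0.0 (none) to 1.0 (everything documented)
    and return the level with the lowest total cost."""
    levels = [i / steps for i in range(steps + 1)]
    return min(levels, key=total_cost)


if __name__ == "__main__":
    best = sweet_spot()
    print(f"no formality:   {total_cost(0.0):.1f}")
    print(f"sweet spot {best:.2f}: {total_cost(best):.1f}")
    print(f"max formality:  {total_cost(1.0):.1f}")
```

With these assumed curves the lowest cost lands in the middle of the range, not at either extreme. Real projects would need measured curves in place of the invented ones.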

Remember that most formal practices are hygiene practices: things that no one wants to do but that are really necessary to be highly productive and produce high quality code (see here for more on hygiene practices).

Statistics Behind Formal / Informal Practices

Formal practices can be applied to many things. Across 15,000+ projects, Capers Jones has measured that the following formal practices (when properly executed) can have a positive effect on a project:

- Formal risk management can raise productivity by 17.8% and quality by 26.4%

- Formal measurement programs can raise productivity by 20.0% and quality by 30.0%

- Formal test plans can raise productivity by 16.6% and quality by 24.1%

- Formal requirements analysis can raise productivity by 16.3% and quality by 23.2%

- Formal SQA teams can raise productivity by 15.2% and quality by 20.5%

- Formal scope management can raise productivity by 13.5% and quality by 18.5%

- Formal project office can raise productivity by 11.8% and quality by 16.8%

A special category of formal practices are inspections:

- Formal code inspections can raise productivity by 20.8% and quality by 30.9%

- Formal requirements inspections can raise productivity by 18.2% and quality by 27.0%

- Formal design inspections can raise productivity by 16.9% and quality by 24.7%

- Formal inspection of test materials can raise productivity by 15.1% and quality by 20.2%

More information on inspections can be found here:

Not being formal enough has its costs, and it is rarely neutral. Here are some of the costs of not being formal enough:

- Informal progress tracking can lower productivity by 0.5% and quality by 1.0%

- Informal requirements gathering can lower productivity by 5.7% and quality by 8.4%

- Inadequate risk analysis can lower productivity by 12.5% and quality by 17.5%

- Inadequate testing can lower productivity by 13.1% and quality by 18.1%

- Inadequate measurement of quality can lower productivity by 13.5% and quality by 18.5%

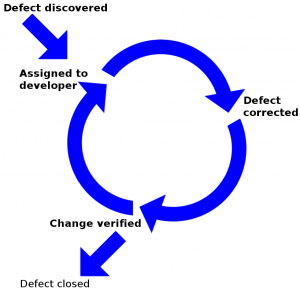

- Inadequate defect tracking can lower productivity by 15.3% and quality by 20.9%

- Inadequate inspections can lower productivity by 16.0% and quality by 22.1%

- Inadequate progress tracking can lower productivity by 16.0% and quality by 22.5%

N.B. progress tracking is on the list twice. Informal progress tracking is fairly neutral, but inadequate progress tracking is deadly.

Conclusion

In development practices it is not a matter of formal vs informal. It is a matter of seeing what kind of variation you are exposed to and recognizing that formality can make a difference in that area. Then it is a matter of only adding as much formality as you need to get maximum productivity.

Formal practices must be monitored: you must have some way of testing whether the formal practice is having an effect on your development. You want to make sure that you are executing all formal practices properly and not just going through the motions of a formal practice with no effect. You must monitor the effects of formality to understand when you are not doing enough and when you are doing too much!

Articles in the “Loser” series

Make no mistake, I am the biggest “Loser” of them all. I believe that I have made every mistake in the book at least once 🙂

Surprisingly, defect priority should not be set by QA. QA are generally the owners of the defect tracking system and control it, but this is one attribute that they should not control. The defect tracker is a shared resource between QA, engineering, engineering management, and product management and is a coordinating mechanism for all these parties.

It is common for new releases to have various installation problems when they initially get to QA. This blocks QA, so they mark the defects with a high severity and high priority. This issue has a high severity and needs to be addressed right away, but remember that bug tracking systems are append only: once this defect gets into the system, it will never get out. This kind of issue should be escalated to the engineers and engineering management because it makes little sense to clog the defect tracking system with it.

Similarly, there may be many cosmetic or minor defects where fixing them might make a huge difference in the user experience and reduce support calls. Even though these defects are minor, they may be easy to fix and save you serious money. Once again, this cannot be decided by QA.

As development progresses we inevitably run into functionality gaps that are either deemed as

Enhancements may or may not become code changes. Even when enhancements turn into code change requests, they will generally not be implemented as the developer or QA think they should be implemented.

The creation of requirements and test defects in the bug tracker goes a long way to cleaning up the bug tracker. In fact, requirements and test defects represent about 25% of defects in most systems (see

The only possible conclusion is that senior management can’t conceive of their projects failing. They must believe that every software project that they initiate will be successful, that other people fail but that they are in the 3 out of 10 that succeed.

The best way to solve all 3 issues is through formal planning and development. Two methodologies that focus directly on planning at the personal and team level are the

Therefore, complexity in software development is about making sure that all the code pathways are accounted for. In increasingly sophisticated software systems the number of code pathways increases exponentially with the call depth. Using formal methods is the only way to account for all the pathways in a sophisticated program; otherwise the number of defects will multiply exponentially and cause your project to fail.
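To get a feel for the exponential growth, here is a minimal sketch under a deliberately simplified assumption: every function has the same number of independent branches, and each branch calls exactly one function at the next level down. The function name `count_paths` and the branching numbers are illustrative only; real call graphs are far less regular.

```python
def count_paths(branches_per_call: int, call_depth: int) -> int:
    """Count distinct pathways through a simplified call tree.

    Assumption: each call chooses one of `branches_per_call` branches,
    and each branch leads to one further call until `call_depth` is used up.
    """
    if call_depth == 0:
        return 1  # a leaf function contributes a single pathway
    return branches_per_call * count_paths(branches_per_call, call_depth - 1)


if __name__ == "__main__":
    for depth in (1, 5, 10, 20):
        print(f"depth {depth:2d}: {count_paths(2, depth):,} pathways")
```

Even with only two branches per call, ten levels of calls already give 1,024 pathways, which is more than anyone can keep track of informally.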

Unfortunately, weak IT leadership, internal politics, and embarrassment over poor estimates mean that the deadline will not move, and overbearing senior executives will pressure teams to hit the original deadline even though that is not possible.

Work executed on these activities will not advance your project and should not be counted in the total of completed hours. So if 9,000 hours have been logged on a 10,000 hour project but 2,000 of those hours were spent on activities that don’t advance the project, then you have really done 7,000 hours of the 10,000 hour project and
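The arithmetic in that example can be written out as a small sketch. The function and parameter names here are illustrative assumptions, not part of any tracking tool.

```python
def percent_complete(hours_logged: float,
                     non_advancing_hours: float,
                     planned_hours: float) -> float:
    """Progress based only on hours that actually advanced the project."""
    productive_hours = hours_logged - non_advancing_hours
    return 100.0 * productive_hours / planned_hours


if __name__ == "__main__":
    naive = percent_complete(9_000, 0, 10_000)       # counts every logged hour
    actual = percent_complete(9_000, 2_000, 10_000)  # excludes non-advancing work
    print(f"naive: {naive:.0f}% complete, actual: {actual:.0f}% complete")
```

Counting every logged hour reports the project as 90% complete, while excluding the 2,000 non-advancing hours shows it is only 70% complete.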

Somehow some developers have interpreted this as meaning that there are no formal processes to be followed. But just because you are putting the priority on working software does not mean that there can be no documentation; just because you are responsive to change doesn’t mean that there is no plan.

After execution,
