The Unified Modeling Language (UML) was adopted as a standard by the OMG in 1997, almost 20 years ago. But despite its longevity, I’m continually surprised at how few organizations actually use it.

Code is the ultimate model for software, but it is like the trees of a forest. You can see a couple of trees, but only a few people can see the entire forest just by looking at the code. For the rest of us, diagrams are the way to see the forest, and UML is the standard for diagrams.

They say, “A picture is worth a thousand words”, and this is true for code; even on a large monitor you can only see so many lines of code. Every other engineering discipline has diagrams for complex systems, e.g. design diagrams for airplanes, blueprints for buildings. In fact, the diagrams need to be created and approved BEFORE the airplane or building is created.

Contrast that with software, where UML diagrams are rarely produced or, if they are produced, are produced as an afterthought. The irony is that the people pushing to build the architecture quickly say that there is no time to make diagrams, but they are the first to complain when the architecture sucks. UML is key to planning (see Not planning is for losers).

I think this happens because developers, like all people, are focused on what they can see and touch right now. It is easier to try to code a GUI interaction or tackle database update problems than it is to work at an abstract level through the interactions that are taking place from GUI to database.

Yet this is where all the architecture is. Good architecture makes all the difference in medium and large systems. Architecture is the glue that holds the software components in place and defines communication through the structure. If you don’t plan the layers and modules of the system then you will continually be making compromises later on.

In particular, medium to large projects (>10,000 function points) are at a very high risk of failure if you don’t consider the architectural issues. Considering that only 3 out of 10 software projects are successful, only a fool would skip planning the architecture (see Failed? You get what you deserve!).

Good diagrams, in particular UML, allow you to abstract away all the low level details of an implementation and let you focus on planning the architecture. This higher level planning leads to better architecture and therefore better extensibility and maintainability of software.

If you are a good coder then you will make a quantum leap in your ability to tackle large problems by being able to work through abstractions at a higher level. How often do we find ourselves unable to implement simple features simply because the architecture doesn’t support it?

Well the architecture doesn’t support it because we spend very little time developing the blueprint for the architecture of the system.

UML diagrams need to be produced at two levels:

- the analysis or ‘what’ level

- the design or ‘how’ level

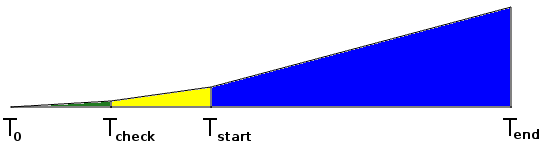

Analysis UML diagrams (class, sequence, collaboration) should be produced early in the project and support all the requirements. Ideally you use a requirements methodology that allows you to trace easily from the requirements onto the diagrams.

Analysis diagrams do not have implementation classes on them, i.e. no vendor specific classes. The goal is to identify how the high level concepts (user, warehouse, product, etc) relate to each other.
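To make “no implementation classes” a bit more concrete, here is a minimal sketch of how the analysis-level concepts might look if you wrote them down as plain types. Only the concept names (user, warehouse, product) come from the text above; the fields, the `receive`, `available`, and `order` operations, and the relationships between them are my own assumptions for illustration, not taken from any real set of requirements.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Product:
    """Analysis-level concept: something that can be stocked and ordered."""
    sku: str   # hypothetical identifier
    name: str


@dataclass
class Warehouse:
    """Analysis-level concept: a place that holds quantities of products."""
    name: str
    stock: dict[Product, int] = field(default_factory=dict)

    def receive(self, product: Product, quantity: int) -> None:
        """Add stock for a product (hypothetical operation)."""
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        self.stock[product] = self.stock.get(product, 0) + quantity

    def available(self, product: Product) -> int:
        """Return how many units of the product are in stock."""
        return self.stock.get(product, 0)


@dataclass
class User:
    """Analysis-level concept: a person who orders products."""
    name: str
    orders: list[tuple[Product, int]] = field(default_factory=list)

    def order(self, warehouse: Warehouse, product: Product, quantity: int) -> bool:
        """Return True if the warehouse could fulfil the order."""
        if quantity <= 0 or warehouse.available(product) < quantity:
            return False
        warehouse.stock[product] -= quantity
        self.orders.append((product, quantity))
        return True
```

Notice that nothing here mentions a database, a web framework, or any vendor class. The sketch only says what the concepts are and how they relate, which is exactly the level at which gaps in the requirements tend to show up.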

These analysis level UML diagrams will help you to identify gaps in the requirements before moving to design. This way you can send your BAs and product managers back to collect missing requirements when you identify missing elements before you get too far down the road.

Once the analysis diagrams validate that the requirements are relatively complete and consistent, you can create design diagrams with the implementation classes. In general, the relationship is one-to-many: each analysis diagram is elaborated by one or more design diagrams.
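The same one-to-many idea can be sketched in code. In the example below, a single analysis-level concept is realized by two design-level classes. Everything named here is hypothetical: `ProductCatalog`, its two implementations, and the `product` table are assumptions I made up for the sketch, and SQLite merely stands in for whatever vendor-specific technology your design would actually use.

```python
from __future__ import annotations

import sqlite3
from abc import ABC, abstractmethod
from typing import Optional


class ProductCatalog(ABC):
    """Analysis-level concept (hypothetical): look up a product name by SKU."""

    @abstractmethod
    def add(self, sku: str, name: str) -> None: ...

    @abstractmethod
    def find(self, sku: str) -> Optional[str]: ...


class InMemoryProductCatalog(ProductCatalog):
    """Design-level class 1: backed by a plain dict."""

    def __init__(self) -> None:
        self._names: dict[str, str] = {}

    def add(self, sku: str, name: str) -> None:
        self._names[sku] = name

    def find(self, sku: str) -> Optional[str]:
        return self._names.get(sku)


class SqliteProductCatalog(ProductCatalog):
    """Design-level class 2: backed by SQLite (the vendor-specific part)."""

    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS product "
            "(sku TEXT PRIMARY KEY, name TEXT NOT NULL)"
        )

    def add(self, sku: str, name: str) -> None:
        with self._conn:  # commits on success, rolls back on error
            self._conn.execute(
                "INSERT OR REPLACE INTO product (sku, name) VALUES (?, ?)",
                (sku, name),
            )

    def find(self, sku: str) -> Optional[str]:
        row = self._conn.execute(
            "SELECT name FROM product WHERE sku = ?", (sku,)
        ).fetchone()
        return row[0] if row else None
```

Code written against `ProductCatalog` does not care which of the two design-level classes it gets, which is the property you want the analysis level to protect.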

Since you have validated the architecture at the analysis level, you can now do the design level without worrying about compromising the architectural integrity. Once the design level is complete you can code without compromising the design level.

When done well, the analysis UML, design UML, and code are all in sync. Good software is properly planned and executed from the top down. It is mentally tougher to create software this way, but the alternative is continuous patches and never-ending bug-fix cycles.

So remember the following example from Covey’s The 7 Habits of Highly Effective People:

You enter a clearing where a man is furiously sawing at a large log, but he is not making any progress. You notice that the saw is dull and is unable to cut the wood, so you say, “Hey, if you sharpen the saw then you will saw the log faster”. To which the man replies, “I don’t have time, I’m too busy sawing the log”.

Don’t be the guy sawing with a dull saw.

UML is the tool to sharpen the saw. It does take time to learn and apply, but you will save yourself much more time and be much more successful.

Bibliography

- Covey, Stephen. The 7 Habits of Highly Effective People

- OMG, Unified Modeling Language™ (UML®) Resource Page


This means that the base rate of success for any software project is only 3 out of 10.

This means that the base rate of success for any software project is only 3 out of 10. When there is a

When there is a  Requirements uncertainty is what leads to

Requirements uncertainty is what leads to  Technical uncertainty exists when it is not clear that all requirements can be

Technical uncertainty exists when it is not clear that all requirements can be  Skills uncertainty comes from using resources that are unfamiliar with the requirements or the implementation technology. Skills uncertainty is a

Skills uncertainty comes from using resources that are unfamiliar with the requirements or the implementation technology. Skills uncertainty is a  An informal business case is possible only if the requirements, technical, and skills uncertainty is low. This only happens in a few situations:

An informal business case is possible only if the requirements, technical, and skills uncertainty is low. This only happens in a few situations: Here is a list of projects that tend to be accepted without any kind of real business case that quantifies the uncertainties:

Here is a list of projects that tend to be accepted without any kind of real business case that quantifies the uncertainties:

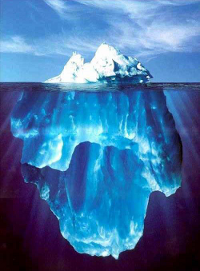

So when you consider launching a software project you must understand that you are looking at the tip of the iceberg. Your ability to handle uncertainty will dictate how successful you will be.

So when you consider launching a software project you must understand that you are looking at the tip of the iceberg. Your ability to handle uncertainty will dictate how successful you will be. Unfortunately the way most organizations develop software resembles the

Unfortunately the way most organizations develop software resembles the

Thus it is that in war the victorious strategist seeks battle after the victory has been won, whereas he who is destined to defeat first fights and afterwards looks for victory in the midst of the fight.

Thus it is that in war the victorious strategist seeks battle after the victory has been won, whereas he who is destined to defeat first fights and afterwards looks for victory in the midst of the fight.  That the impact of your army may be like a grindstone dashed against an egg—this is effected by the science of weak points and strong

That the impact of your army may be like a grindstone dashed against an egg—this is effected by the science of weak points and strong

places and hastens downwards. So in war, the way is to avoid what is strong and to strike at what is weak.

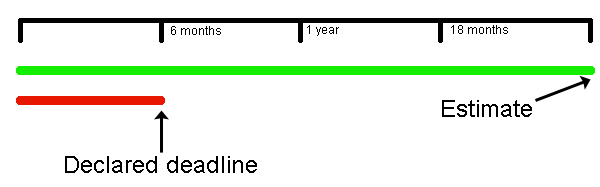

places and hastens downwards. So in war, the way is to avoid what is strong and to strike at what is weak. When organizations bite off more than they can chew they exert tremendous pressures on the team resources to work extended hours to make deadlines that are often unrealistic. In the pressure cooker you can expect key personnel to defect and put you into a worse position. How many times have you found yourself on a

When organizations bite off more than they can chew they exert tremendous pressures on the team resources to work extended hours to make deadlines that are often unrealistic. In the pressure cooker you can expect key personnel to defect and put you into a worse position. How many times have you found yourself on a